Time in Brain and Behaviour Laboratory

Welcome to the Time in Brain and Behaviour Laboratory at the University of Melbourne, led by Dr Hinze Hogendoorn as Principal Investigator.

The lab investigates time in the brain from neural, cognitive, and behavioural perspectives. We use computational methods and neuroimaging techniques, particularly multivariate EEG decoding, as well as psychophysical and behavioural approaches, to study how the brain works over time.

We investigate questions such as how time is encoded in the brain, and how the brain keeps track of time. We are especially interested in how the brain solves the computational problems that result from the brain itself needing time to process information. Have a look at our Projects page to see what we are currently working on!

Lab News

Update (27-06-2021): We are recruiting! We have an open postdoc position for someone eager to work on how we perceive the world in real-time. More details here!

Update (20-06-2021): Did the pandemic lockdown mess with your experience of time? Hinze wrote an article for Pursuit about how the COVID-19 pandemic (and lockdown specifically) influenced our sense of time last year. Check it out here.

Update (01-04-2021): We are very excited that the neural modeling project that Tony and Hinze have been working on has just been accepted in Journal of Neuroscience. In this study, we show that spike-timing-dependent plasticity is sufficient to spontaneously cause a layered neural network to learn to extrapolate the position of a moving object, and what's more, the pattern mimics the perceptual flash-lag effect (preprint here).

Update (08-02-2021): Congratulations to Tessel for getting her most recent EEG project accepted in Cortex! In this project, we investigated how quickly predictive motion signals build up during motion processing, and how quickly they are revised when predictions don't come true (spoiler: very quickly!).

Update (21-10-2020): Congratulations Philippa and Sidney for your paper about extrapolation in the High-Phi illusion, accepted today in Journal of Vision! A very cool project, including a whole experiment with psychophysics-from-home due to COVID-19!

Update (05-10-2020): We are very happy to announce that soon-to-be-Dr Will Turner will be joining us in early 2021 as the first TimingLab postdoc! Looking forward to working with you, Will!

Update (29-09-2020): I am honoured to be awarded the Young Investigator Award from the Australasian Cognitive Neuroscience Society this year! Come join us at the virtual meeting on Oct 14, 2020.

Update (04-08-2020): I am very grateful to have been awarded an ARC Future Fellowship to continue our work on predicting the present during the coming four years.

Update (01-04-2020): We welcome Tim Cottier and Charlie Sexton as new PhD students in the lab!

Update (17-03-2020): We are very proud of Tessel's latest paper, just out in PNAS, using EEG to show that our brains predict the present!

Update (01-03-2020): We are very happy to welcome Caoimhe Moran as a new PhD student in our lab, co-supervised by Dr. Ayelet Landau as part of a collaboration with the Hebrew University of Jerusalem, Israel.

- Dr Hinze Hogendoorn

Principal Investigator

I am a Senior Research Fellow at the Melbourne School of Psychological Science, University of Melbourne, Australia. I lead the Time in Brain and Behaviour Laboratory as Principal Investigator, and I am also Deputy Director of the Decision Sciences Hub.

Previously, I was Assistant Professor in the Department of Experimental Psychology at Utrecht University in the Netherlands, and Fellow of Cognitive Neuroscience at University College Utrecht.

My primary research interests lie in the time-course of visual processing and visual perception. By combining psychophysical, behavioural, computational and neuroimaging techniques, I investigate questions such as how the brain keeps track of time and how the brain functions in real time. I am currently especially interested in how the brain solves the computational problems that result from its own internal delays.

- Will Turner, PhD

Postdoctoral Researcher

- Tessel Blom, MSc

PhD Student, co-supervised with A/Prof Stefan Bode

- Philippa Johnson, MSc

PhD Student, co-supervised with A/Prof Stefan Bode

- Caoimhe Moran, MSc

PhD Student, co-supervised with A/Prof Ayelet Landau (Hebrew University of Jerusalem)

- Jane Yook

PhD Student, co-supervised with Dr Simone Vossel and Dr Ralph Weidner (Juelich Research Centre)

- Tim Cottier

PhD Student, co-supervised with Prof Tony Burkitt (Department of Biomedical Engineering)

- Charlie Sexton

PhD Student, co-supervised with Prof Tony Burkitt (Department of Biomedical Engineering)

- Ella Wilson

Honours Student

- Milan Andrejevic, PhD

Research Assistant

- Vinay Mepani

Research Assistant

- Mackenzie Murphy

Research Assistant

- Benjamin Lowe

Visiting PhD Student

Collaborators

- Prof. Anthony Burkitt – Biomedical Engineering, University of Melbourne

- Prof. Allison McKendrick – Optometry and Vision Sciences, University of Melbourne

- Dr. Daniel Feuerriegel – Decision Neuroscience Lab, University of Melbourne

- A/Prof Geoff Stuart – University of Melbourne

- A/Prof Thomas Carlson – University of Sydney

- Prof. Frans Verstraten – University of Sydney

- Prof. David Alais – University of Sydney

- Dr. Hamish MacDougall – University of Sydney

- Prof. Patrick Cavanagh – Université Paris Descartes, France

- Dr. Rufin VanRullen – CNRS Toulouse, France

Lab Alumni

Honours / PhD Students

Jim Maarseveen, MSc (PhD 2020)

Ferron Dearnley (Honours 2020)

Chandelle Piazza (Honours 2020)

Sidney Davies (Honours 2019)

Lysha Lee (Honours 2019)

Dominic Yip (Honours 2018)

Duy Dao (Honours 2018)

Kate Coffey (Honours 2018)

Research Assistants / Interns

Elle van Heusden (RA)

Elektra Schubert (Intern)

Adam Ryde (Intern)

Chelsea Liang (Intern)

Ryan Maloney (RA)

Ahmad Al-Dhalaan (Intern)

Joseph Gabriele (Intern)

Nicky Rickerby (RA)

Predicting the Present

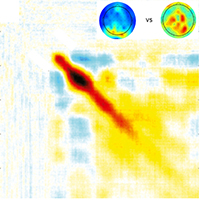

The brain needs time to process sensory input, meaning that our conscious experience of the world is based on information that is outdated by the time we perceive it. One way that the brain might compensate for its internal delays is by prediction. This project uses time-resolved EEG recordings to study the role of anticipatory neural activity in “predicting” the present.

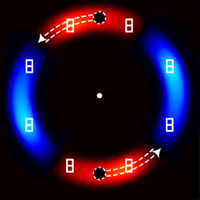

Shortcuts to consciousness

Although the brain needs time to process sensory input, being able to rapidly detect and respond to events in the outside world is very important for any organism's survival. Because in some cases speed is more important than accuracy, our brains have evolved to sometimes 'fast-track' crucial information so that we are able to act on it. To do so, the brain makes assumptions, and when these assumptions are violated, visual illusions result: we see things that are not really happening. This project uses visual illusions such as the flash-lag effect to understand the shortcuts that the brain takes when compensating for its own delays.

When predictions fail

The brain uses predictive strategies to compensate for its own delays, allowing us to "see" objects where they are right now, even if the sensory information about "right now" has not yet been processed. But what happens if events unfold unpredictably, such as when a moving object changes direction? The brain would need time to detect that its prediction was wrong, and during that time it would continue to extrapolate the object along its initial trajectory. What happens to these failed predictions? How does the brain revise its old prediction? This project uses a combination of multivariate EEG decoding and behavioural techniques to understand how the brain corrects its failed predictions.

If you are interested in contributing to any of these projects, or have another idea you would like to discuss, please get in touch!

We gratefully acknowledge funding from the following sources:

Pre-print

Whyte, C.J., Robinson, A.K., Grootswagers, T., Hogendoorn, H., & Carlson, T. A. Decoding Predictions and Violations of Object Position and Category in Time-resolved EEG. bioRxiv 2020.04.08.032888. (link)

2023

Johnson, P.A., Blom, T., van Gaal, S., Feuerriegel, D., Bode, S., & Hogendoorn, H. (2023). Position representations of moving objects align with real-time position in the early visual response. eLife 12:e82424. (pdf)

2022

Hogendoorn, H. (2022). Blurred Lines: Memory, Perception, and Consciousness: Commentary on “Consciousness as a Memory System” by Budson et al. (2022). Cognitive and Behavioral Neurology 10.1097/wnn.0000000000000325. (pdf)

Bode, S., Schubert, E., Hogendoorn, H., & Feuerriegel, D.C. (2022). Decoding continuous variables from EEG data using linear support vector regression (SVR) analysis with the Decision Decoding Toolbox (DDTBOX). Frontiers in Neuroscience 16. (pdf)

Hogendoorn, H. (2022). Perception in real-time: predicting the present, reconstructing the past. Trends in Cognitive Sciences 26(2), 128-141. (pdf)

Yook, J., Lee, L., Vossel, S., Weidner, R., & Hogendoorn, H. (2022). Motion extrapolation in the flash-lag effect depends on perceived, rather than physical speed. Vision Research 193:107978. (pdf)

2021

Schubert, E., Rosenblatt, D., Eliby, D., Kashima, Y., Hogendoorn, H., & Bode, S. (2021). Decoding explicit and implicit representations of health and taste attributes of foods in the human brain. Neuropsychologia 162:108045. (pdf)

Burkitt, A.N., & Hogendoorn, H. (2021). Predictive visual motion extrapolation emerges spontaneously and without supervision at each layer of a hierarchical neural network with spike-timing-dependent plasticity. The Journal of Neuroscience 41(20), 4428-4438. (pdf)

Blom, T., Bode, S., & Hogendoorn, H. (2021). The time-course of prediction formation and revision in human visual motion processing. Cortex 138, 191-202. (pdf)

Feuerriegel, D.C., Blom, T., & Hogendoorn, H. (2021). Predictive activation of sensory representations as a source of evidence in perceptual decision-making. Cortex 136, 140-146. (pdf)

Feuerriegel, D.C., Yook, J., Quek, G.L., Hogendoorn, H., & Bode, S. (2021). Visual mismatch responses index surprise signalling but not expectation suppression. Cortex 134, 16-29. (pdf)

2020

Johnson, P.A., Davies, S., & Hogendoorn, H. (2020). Motion extrapolation in the high-phi illusion: analogous but dissociable effects on perceived position and perceived motion. Journal of Vision. (pdf)

Davidson, M.J., Mithen, W., Hogendoorn, H., van Boxtel, J.J.A., & Tsuchiya, N. (2020). The SSVEP tracks attention, not consciousness, during perceptual filling-in. eLife 2020;9:e60031. (pdf)

Desantis, A., Chan Hon Tong, A., Collins, T., Hogendoorn, H., & Cavanagh, P. (2020). Decoding the temporal dynamics of covert spatial attention using multivariate EEG analysis: contributions of raw amplitude and alpha power. Frontiers in Human Neuroscience 10.3389/fnhum.2020.570419. (pdf)

Stuart, G.W., Yip, D., & Hogendoorn, H. (2020). The role of hue in visual search for texture differences: Implications for camouflage design. Vision Research 17, 16-26. (pdf)

Hogendoorn, H. (2020). Motion Extrapolation in Visual Processing: Lessons from 25 Years of Flash-Lag Debate. The Journal of Neuroscience 40(30), 5698–5705. (pdf)

Blom, T., Johnson, P., Feuerriegel, D., Bode, S., & Hogendoorn, H. (2020). Predictions drive neural representations of visual events ahead of incoming sensory information. Proceedings of the National Academy of Sciences USA. DOI 10.1073/pnas.1917777117. (pdf)

2019

Coffey, K., Adamian, N., Blom, T., van Heusden, E., Cavanagh, P., & Hogendoorn, H. (2019). Expecting the unexpected: Temporal expectation increases the flash-grab effect. Journal of Vision 19(9). (pdf)

Hogendoorn, H., & Burkitt, A.N. (2019). Predictive coding with neural transmission delays: a real-time temporal alignment hypothesis. eNeuro 10.1523/ENEURO.0412-18.2019. (pdf)

Maarseveen, J., Paffen, C.L.E., Verstraten, F.A.J., & Hogendoorn, H. (2019). The duration aftereffect does not reflect adaptation to perceived duration. PLOS ONE 14(3): e0213163. (pdf)

Blom, T., Liang, Q., & Hogendoorn, H. (2019). When predictions fail: correction for extrapolation in the flash-grab effect. Journal of Vision 19(3). (pdf)

Van Heusden, E., Harris, A.M., Garrido, M., & Hogendoorn, H. (2019). Predictive coding of visual motion in both monocular and binocular human visual processing. Journal of Vision 19(3). (pdf)

2018

Goddard, E., Klein, C., Solomon, S.G., Hogendoorn, H., & Carlson, T.A. (2018). Interpreting the dimensions of neural feature representations revealed by dimensionality reduction. NeuroImage 180A, 41-67. (pdf)

Van Heusden, E., Rolfs, M., Cavanagh, P., & Hogendoorn, H. (2018). Motion extrapolation for eye movements predicts perceived motion-induced position shifts. Journal of Neuroscience 38(38), 8243-8250. (pdf)

Maarseveen, J., Hogendoorn, H., Verstraten, F.A.J., & Paffen, C.L.E. (2018). Attention Gates the Selective Encoding of Duration. Scientific Reports 8, 2522. (pdf)

Hogendoorn, H., & Burkitt, A.N. (2018). Predictive coding of visual object position ahead of moving objects revealed by time-resolved EEG decoding. NeuroImage 171, 55-61. (pdf)

2017

Maarseveen, J., Paffen, C.L.E., Verstraten, F.A.J., & Hogendoorn, H. (2017). Representing dynamic stimulus information during occlusion. Vision Research 138, 40-49. (pdf)

Hogendoorn, H., Alais, D., MacDougall, H., & Verstraten, F.A.J. (2017). Velocity perception in a moving observer. Vision Research 138, 12-17. (pdf)

Fahrenfort, J.J., van Leeuwen, J., Olivers, C., & Hogendoorn, H. (2017). Perceptual Integration without Conscious Access. Proceedings of the National Academy of Sciences USA 114(14), 3744-3749. (pdf)

Maarseveen, J., Hogendoorn, H., Verstraten, F.A.J., & Paffen, C.L.E. (2017). An investigation of the spatial selectivity of the duration after-effect. Vision Research 130, 67-75. (pdf)

Hogendoorn, H., Verstraten, F.A.J., MacDougall, H., & Alais, D. (2017). Vestibular signals of self-motion modulate global motion perception. Vision Research 130, 22-30. (pdf)

2016

Hogendoorn, H. (2016). Voluntary saccadic eye movements ride the attentional rhythm. Journal of Cognitive Neuroscience 28(10), 1625-1635. (pdf)

2015

Hogendoorn, H., Kammers, M.P.M., Haggard, P., & Verstraten, F.A.J. (2015). Self-touch modulates the somatosensory evoked P100. Experimental Brain Research 233(10), 2845-2858. (pdf)

Hogendoorn, H., Verstraten, F.A.J., & Cavanagh, P. (2015). Strikingly rapid neural basis of motion-induced position shifts revealed by high temporal-resolution EEG pattern classification. Vision Research 113, 1-10. (pdf)

Hogendoorn, H. (2015). From sensation to perception: Using multivariate classification of visual illusions to identify neural correlates of conscious awareness in space and time. Perception 44, 71-78. (pdf)

Article in Pursuit about time perception during COVID-19 lockdown

Did the pandemic lockdown mess with your experience of time? Hinze wrote an article for Pursuit about how the COVID-19 pandemic (and lockdown specifically) influenced our experience of time last year. (2021). link.

Article in The Conversation about our recent EEG-decoding paper in PNAS

We were invited to write an article for The Conversation about what Tessel's 2020 PNAS paper can tell us about how the brain uses prediction to allow us to function in the present, and how this can lead to us 'seeing' an expected present that never comes to pass. (2020). link.

Explaining prediction on Channel 10's SCOPE

Channel 10's children's program SCOPE visited the lab to discuss how the brain uses prediction to allow us to compensate for its own delays. (2018). link.

Article on prediction in Pursuit Magazine

Pursuit, the University of Melbourne's outreach magazine, wrote an article on our work on prediction on the basis of our 2018 Journal of Neuroscience paper. (2018). link.

Time in the Brain at TEDxUU

Hinze was invited to give a talk about time in the brain at TEDxUtrechtUniversity, an independently organized TED event with the theme ‘Change from Within’. The aim of this talk was to make some of his fundamental research accessible to a broad audience. (2016). link.

Article in TVOO on Time in the Brain (Dutch)

Hinze was invited to write an article for the Dutch management magazine Tijdschrift voor Ontwikkeling in Organisaties ("Journal for Development in Organisations") about time in the brain, and how our experience of the present moment is actually an illusion. (2015). link.

Episode on time in Dutch popular science TV show 'Labyrinth'

Hinze was invited to contribute to the episode "De dimensie tijd (the time dimension)" on the Dutch national television program VPRO Labyrinth. (2013). link.

Dr. Hinze Hogendoorn

Redmond Barry Building, Room 806

Melbourne University

Parkville, VIC 3010, Australia

Tel: +61 (0)3 8344 3895

Email: hhogendoorn@unimelb.edu.au

Lab website -- Staff website

Twitter: @hinzehogendoorn